Decentralized IPFS Discovery Directory

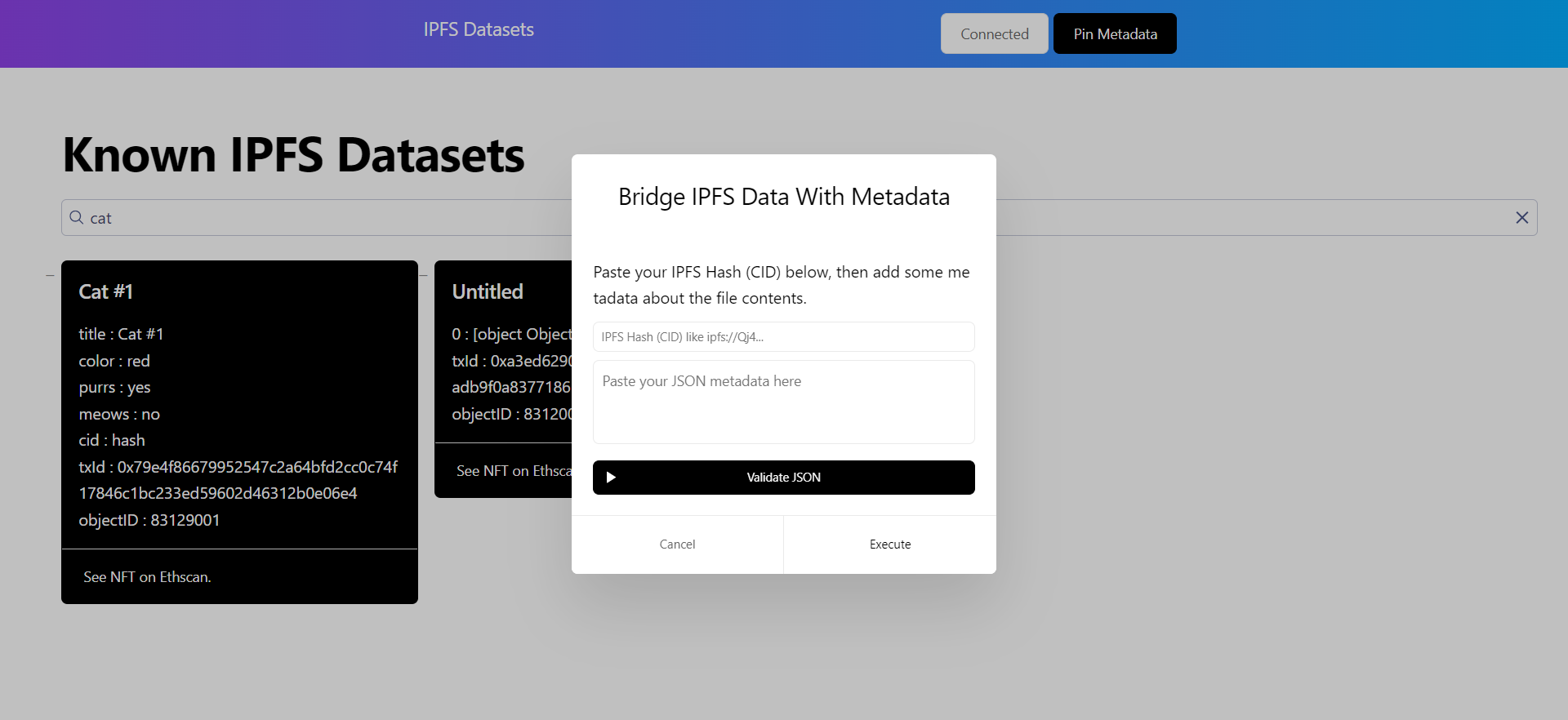

Keeping track of files on IPFS is hard. Our project enables binding custom metadata to any IPFS dataset (identified by its IPFS hash) for easier tracking and discovery.

Project Description

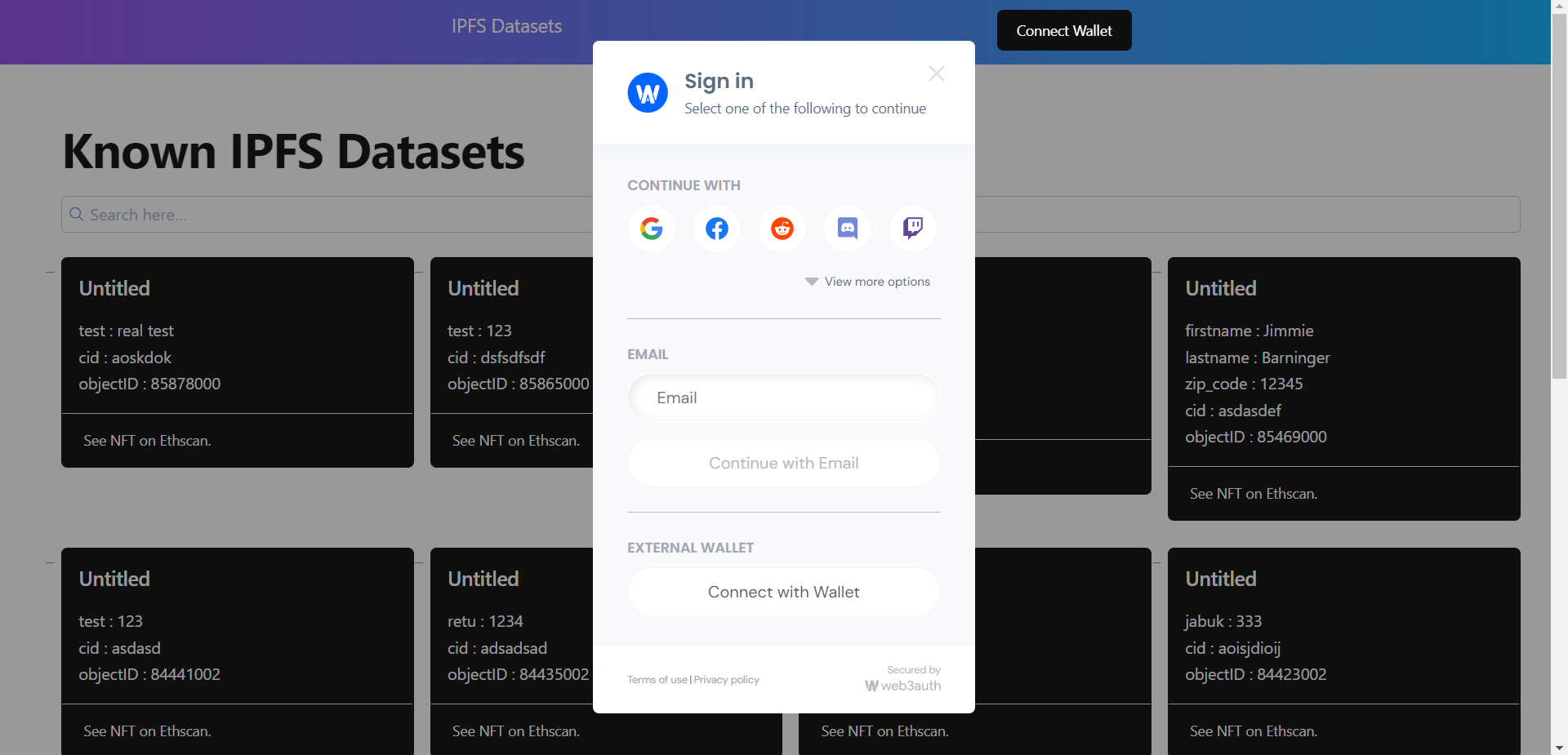

Keeping track of files on IPFS is hard. Our project aims to make files and datasets on IPFS easier to discover and search. Any user who authenticates via web3auth on the React webapp can submit the IPFS hash of a dataset together with the metadata, in JSON format, that they want to bind to it. From the frontend, the metadata is uploaded to IPFS as a .json file (via Tatum's IPFS API), which produces a new hash for the metadata.json file. With both the dataset hash and the metadata hash in hand, an NFT is minted (using Tatum's Express NFT minting API) to an ETH wallet that serves as the webapp's archive. The metadata is then sent to Algolia, an AI-powered search service with React search components, which indexes all submitted metadata. The webapp's search component queries that index by keyword, so users can discover relevant datasets and files hosted on IPFS.

We really wanted to add social features such as saving datasets for later and upvoting/downvoting, but sadly ran out of time; these would require a modified ERC721 contract. Custom blockchain crawlers could also be combined with AI to generate metadata by analyzing the files on IPFS themselves, somewhat like a Google for IPFS files.
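The submission flow described above is a strict sequence: upload the metadata, mint with both hashes, then index. A minimal sketch of that orchestration, assuming the three external calls are passed in as functions; the `Services` interface, `submitDataset`, and the record fields are hypothetical names for illustration, not the project's actual code or the Tatum/Algolia request formats. The only library-specific detail is `objectID`, which Algolia uses as a record's unique identifier.

```typescript
// Hypothetical service interface: each method stands in for one external call
// (Tatum IPFS upload, Tatum Express NFT mint, Algolia indexing). The real request
// formats of those APIs are not modeled here.
interface Services {
  uploadJson(metadata: Record<string, unknown>): Promise<string>; // resolves to the metadata IPFS hash
  mintNft(datasetHash: string, metadataHash: string): Promise<string>; // resolves to a transaction id
  indexRecord(record: SearchRecord): Promise<void>;
}

// Shape of the record pushed to the search index. `objectID` is the field Algolia
// uses as a record's unique identifier.
interface SearchRecord {
  objectID: string;
  datasetHash: string;
  metadataHash: string;
  mintTx: string;
  [field: string]: unknown;
}

async function submitDataset(
  datasetHash: string,
  metadata: Record<string, unknown>,
  services: Services,
): Promise<SearchRecord> {
  if (!datasetHash.trim()) {
    throw new Error("datasetHash is required");
  }
  // Step 1: store the metadata itself on IPFS and get its hash.
  const metadataHash = await services.uploadJson(metadata);
  // Step 2: mint an NFT linking the dataset hash to the metadata hash.
  const mintTx = await services.mintNft(datasetHash, metadataHash);
  // Step 3: index the metadata for keyword search. User-supplied fields are
  // spread first so they cannot overwrite the hashes or the record id.
  const record: SearchRecord = {
    ...metadata,
    objectID: metadataHash,
    datasetHash,
    metadataHash,
    mintTx,
  };
  await services.indexRecord(record);
  return record;
}
```

Using the metadata hash as `objectID` means two submissions for the same dataset become separate search records instead of overwriting each other; keying on the dataset hash would keep a single record per dataset.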

How it's Made

The project was developed as a React webapp using Geist-UI and Algolia's React components. Algolia's search components connect to Algolia's backend services, where the metadata is processed and indexed (this saved us a ton of time!). We use web3auth to authenticate users who want to submit metadata. Because of time constraints, however, minting is currently done through Tatum's Express NFT API (again, this saved us a TON of time; we got it working in about 5 minutes). A more feature-rich platform, with saving and upvoting/downvoting, would need an extension of the basic ERC721 contract.

We use Tatum's IPFS upload API. Surprisingly, the hardest part of this project was uploading a JSON object from JavaScript to IPFS, because the API requires a file upload. We kind of 'hacked' our way around this with a backend Flask server that saves the JSON to the filesystem and uploads it via Tatum.
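The file-upload requirement can also be met without writing to disk: a JSON object can be wrapped in an in-memory `Blob` and appended to a `FormData` body as a named file. This is an alternative technique, not what the project did. A minimal sketch, assuming a multipart upload endpoint; `jsonToFormData` and `uploadJsonToIpfs` are hypothetical helpers, and the `file` field name, `x-api-key` header, and endpoint URL are assumptions to check against the provider's API reference.

```typescript
// Wrap a JS object as an in-memory JSON file so it can be sent to an endpoint
// that only accepts multipart file uploads.
function jsonToFormData(
  metadata: Record<string, unknown>,
  fileName = "metadata.json",
  fieldName = "file", // assumption: form field name expected by the upload API
): FormData {
  const blob = new Blob([JSON.stringify(metadata)], { type: "application/json" });
  const form = new FormData();
  // Passing a file name makes this part a file upload rather than a text field.
  form.append(fieldName, blob, fileName);
  return form;
}

async function uploadJsonToIpfs(
  metadata: Record<string, unknown>,
  endpoint: string, // hypothetical: the provider's IPFS upload URL
  apiKey: string,
): Promise<unknown> {
  const res = await fetch(endpoint, {
    method: "POST",
    // assumption: API key header name; Content-Type is left unset so fetch
    // generates the multipart boundary itself.
    headers: { "x-api-key": apiKey },
    body: jsonToFormData(metadata),
  });
  if (!res.ok) {
    throw new Error(`IPFS upload failed: HTTP ${res.status}`);
  }
  return res.json();
}
```

One reason to keep a backend anyway: calling the upload API directly from the browser exposes the API key to every visitor, whereas a server-side proxy (such as the Flask server) keeps it private.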