Hashscan

Hashscan is an aggregated web3 user analytics. It's focuses on users rather than protocols. It allows to compose user segment from on-chain activity and get event-based analytics for that segment

Project Description

Hashscan is an aggregated web3 user analytics. It allows to compose a segment of users based on filters and view event analytics for this user segment. Our goal was to build analytics mostly focused on users rather than projects. We also wanted to make a web3 analytics that’s easier to use than Dune yet composable.

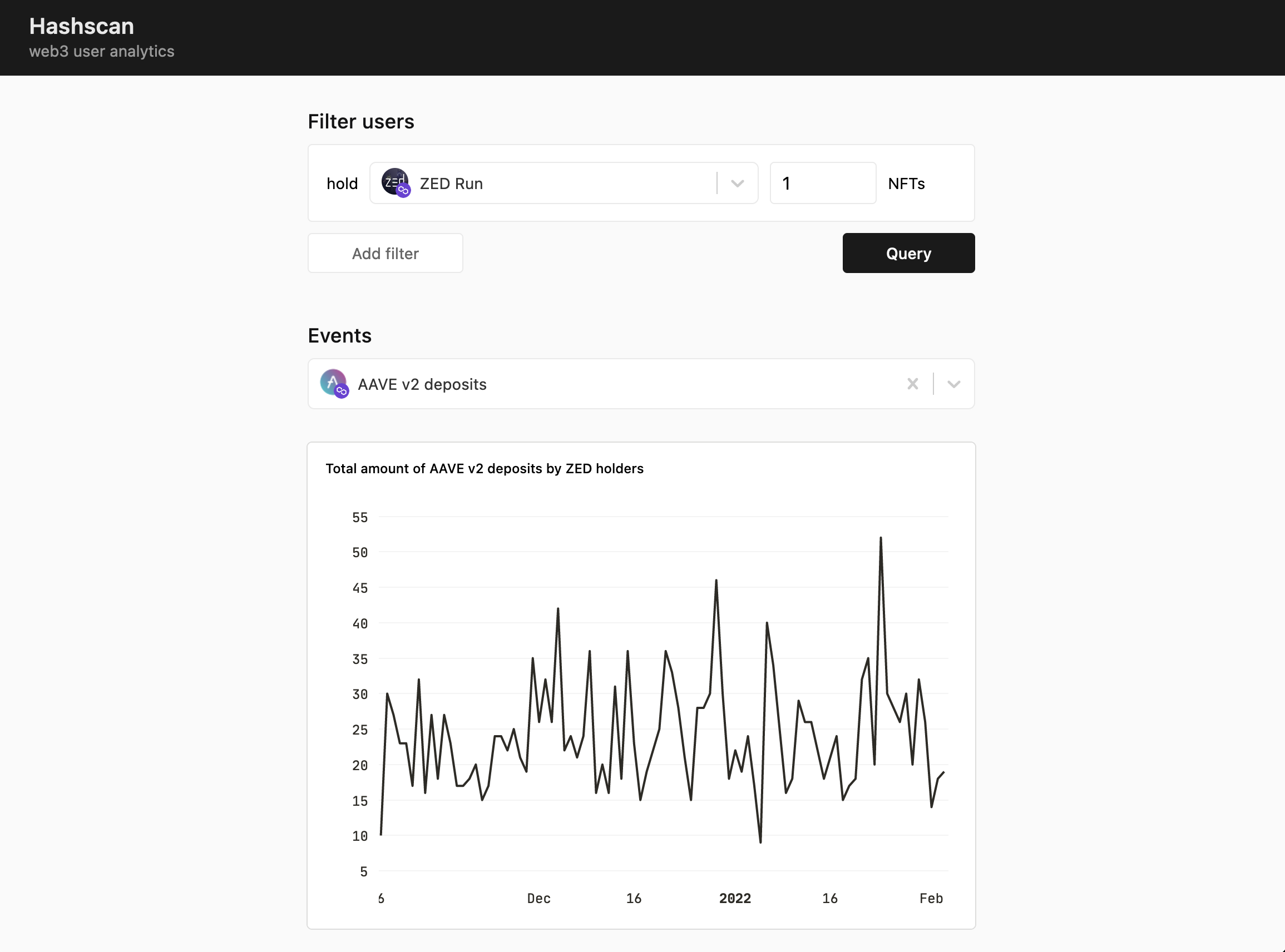

In this demo we focused on several aggregated analytics use-cases related to DeFi and NFTs. For example, you can use Hashscan to view how many times LobsterDAO members deposited to Aave over the last months. You can also check how many users has Full Membership of Friends With Benefits, and whether there are active LooksRare traders or not.

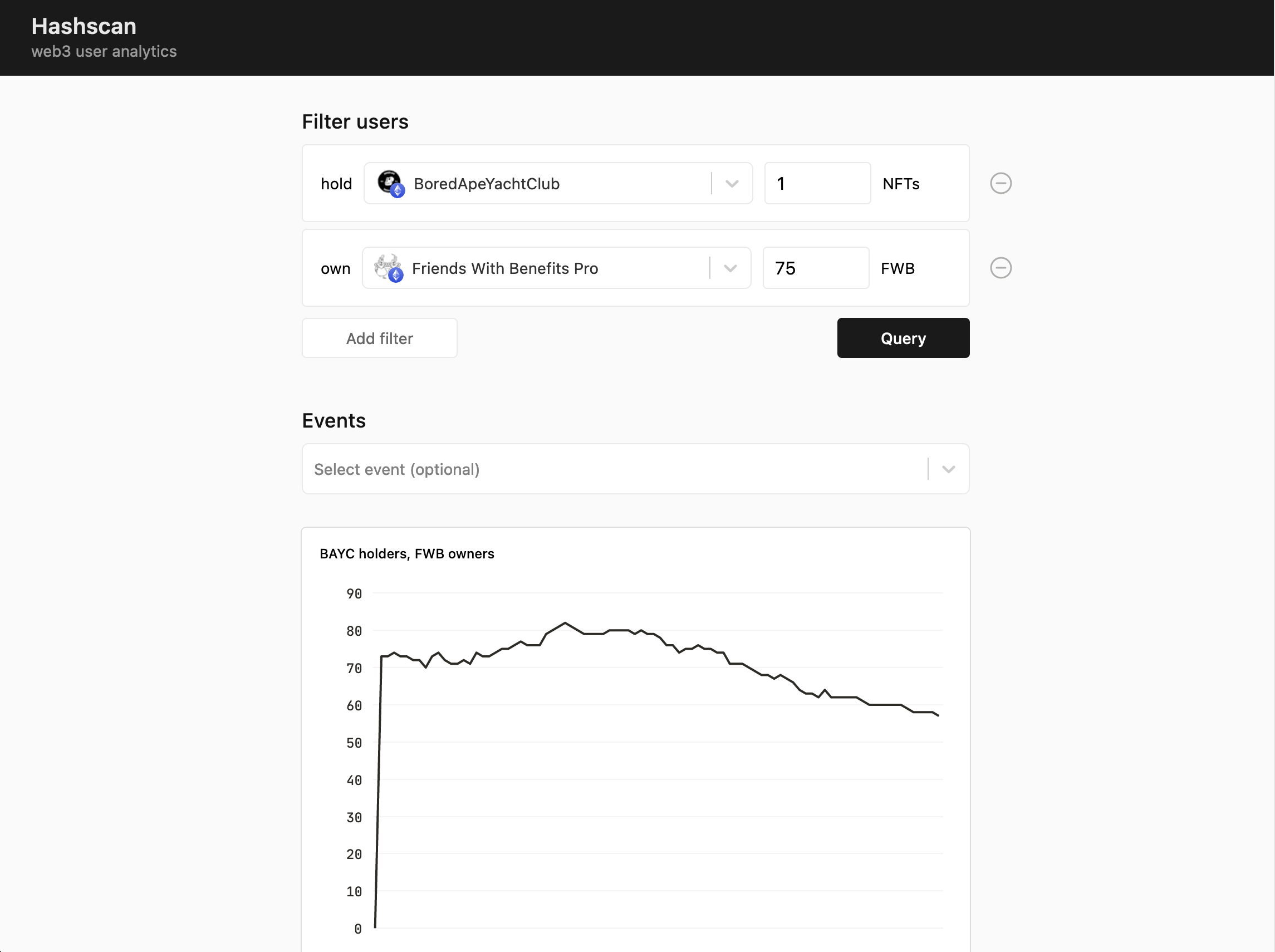

First, you need to compose a user segment by choosing filters. There are several types of filters: users owning NFT tokens, users holding the required amount of ERC20 tokens. Another kind of user filter is event-based, for example segment of users who traded on OpenSea in the last month.

After composing a user segment you can see how many users in this segment and how this amount has changed over time. By selecting an event, you will see a total amount of events called by this user segment.

Building aggregated on-chain analytics is hard you need to index and query a lot of data. To make it work fast and potentially scalable, we had to fetch a lot of data into our database from multiple data providers. We also used Clickhouse instead of relational databases to make queries fast.

In this demo we supported filters for a number of NFTs and ERC20 tokens from Polygon and Ethereum. As both blockchains are EVM-compatible, you can choose a user segment based on holding tokens on Ethereum network but view analytics for an event on Polygon. This is cool.

In the future, we plan to support event-based user segmentation, analytics for more events, and more parameters for charts.

How it's Made

Hashscan consists of a frontend app, backend API, Clickhouse database, and scripts to fetch various kinds of data from multiple data providers.

To calculate token owners at a specific point of time initially we used transfer events of ERC20/ERC721/ERC1155 tokens, but then switched to Covalent API – they have an endpoint exactly for that. To display aggregated event analytics we fetched smart contract events from an event-specific Covalent API endpoints. To get real-time event updated we used Moralis Smart Contract Events. We also used BitQuery for fetching data for several on-chain events.

Token holders and smart contract event data was then stored in Clickhouse database. Clickhouse is designed for a large analytics system and allows us to perform x50-150 faster queries compared toPostgres.

For the backend API, we used Vercel Function written in Go. SQL queries are generated dynamically based on user filters and events selected by a user.

Frontend app is written in Typescript with Next.js framework and hosted on Vercel.